Last week I, along with students in my “AI and Advocacy” course at Stony Brook University, released a new report entitled “AI and Advocacy: Maximizing Potential, Minimizing Risk.” The report addresses any advocate who is considering using AI in their work. We argue that AI can be useful for advocates, but they must be careful to center human judgment and avoid risks that could distract from their important work or even contribute to societal harms. You can download and read the whole report on the Stony Brook Academic Commons.

The report identifies three major opportunities and accompanying risks, plus one strong recommendation:

- Advocates can use AI tools to increase outreach—at the risk of compromising trust

- Advocates can use AI to address complex issues—at the risk of partially hindering progress

- Advocates can use training data to advocate for their causes—at the risk of misinformation and bias

- Advocates must proactively assess privacy impacts before deploying AI tools

Below, I’ll expand on a few of these insights, plus talk a bit about the pedagogy of collaborative report-writing. I previously shared these on my LinkedIn page. Please share and help us reach folks interested in the intersection of AI and Advocacy!

AI, Advocacy, and Privacy

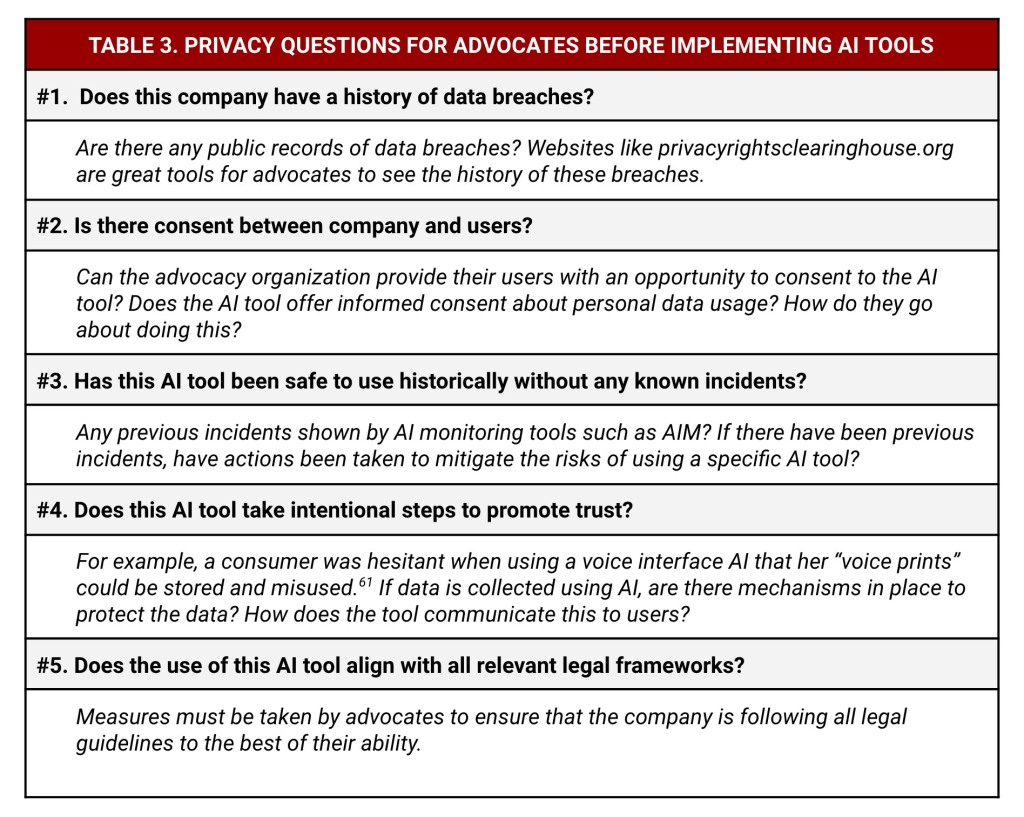

Advocates must be proactive to assess privacy impacts before deploying AI tools. We write that “it is imperative that the advocate remain mindful of what it entails when they allow their data — or the data of those they advocate for—to be used for artificial intelligence, as it may be used for ulterior purposes.” We made this convenient table to think through important questions to ask before using an AI tool.

AI and “Backtracking” on Advocacy Goals

One way AI tools can be used for social good is by handling the enormous complexity of the world’s most challenging issues. AI tools could help analyze huge datasets about climate change and wealth inequality that overwhelm human decision makers and analysts. However, advocates must be wary of contradictions where using AI for a cause actually contributes to the problem they are attempting to solve.

In our report, we call this “backtracking”: steps taken to use AI toward progressive advocacy outcomes inadvertently undermine the cause or even worsen the problem they aim to solve. In the economic sector, AI holds the promise of leveling the playing field, but it can shrink the field of employment through layoffs. For environmental policymakers, generative AI algorithms could be transformative in developing clean energy solutions, but use of these tools risks increasing carbon emissions.

When using an AI tool, advocates should consider:

❓ Could the material effects of the AI tool lead to misalignment with your mission, or backtracking on the problem you are trying to solve?

❓ Are you clearly explaining to your audiences why you’ve chosen to use an AI tool?

Collaborative Report Writing Pedagogy

As an IDEA Fellow in Ethical AI at Stony Brook University, I’ve been given time to focus on developing research and teaching at that intersection. In this course, I wanted students to explore how AI technology could impact DEIA advocacy and actually contribute to conversations about advocates’ use of AI. Students led the research and writing of the report, which you can read more about in the Methods appendix. My role was mostly to direct revisions, cut for length, and occasionally rewrite sentences for style.

Here are two things I learned from the process:

🧑🎓 The report motivated students to engage in higher-level learning, going from understanding viewpoints to defending arguments. As my friend Victoria Ledford, Ph.D. pointed out, the course ended up including multiple high-impact teaching practices (HIPs): interactions with faculty and peers about substantive matters (semester-long work, in teams and in frequent meetings with me), opportunities to discover the relevance of learning through real-world applications (applying research about ethical/persuasive AI to the advocacy sector), and public demonstration of competence (producing a report for a specific audience). These HIPs seemed to lead to some serious learning about AI, advocacy, and writing: I noticed that most of my students went from *maybe* knowing and caring about ChatGPT to being able to defend their views in conversations with professors across campus who attended our public AI Advocacy fair.

💼 The project gave students professional confidence, skills, and deliverables for their careers. Students can proudly display this report on their resumes/CVs and LinkedIn profiles as undergraduate research. Even more importantly, it gave them intentional experience with teamwork and collaboration. Students were tasked with creating outlines, delegating work, and FREQUENTLY revising one another’s writing. They leave not only with a publication, but also with examples they can use to demonstrate their ability to work in teams on complex tasks. One student even told me that they felt their confidence increase because they were being treated as a worthy intellectual partner for the first time.

If you’re interested in learning more, please email/DM me, and you can consult some resources I’ve pasted below. I will say, this project took a lot of time and energy from both students and me—grading or revising group papers to a rubric is very different from giving feedback to collaborators on research! In the future, I would allocate more time for giving and responding to feedback and I’d consider implementing this assignment in a more advanced course.

Read our report: https://lnkd.in/g7XrXUuU

WRT302 syllabus: https://lnkd.in/eb8cGzhb

WRT302 schedule of readings: https://lnkd.in/eXXedfYv

HIPs: https://lnkd.in/eHyngHDM